MiniMax-M2.7 Open-Source Model


A 230B-Parameter Self-Evolving Agent Model

Core Features

MiniMax-M2.7 is a 230-billion-parameter text-to-text model from MiniMax, built around what the company describes as a self-evolving architecture: the model iterates on and optimizes itself through the Agent Harness software infrastructure. It is open-sourced on GitHub, so developers can access and use it directly.

Key Advantages

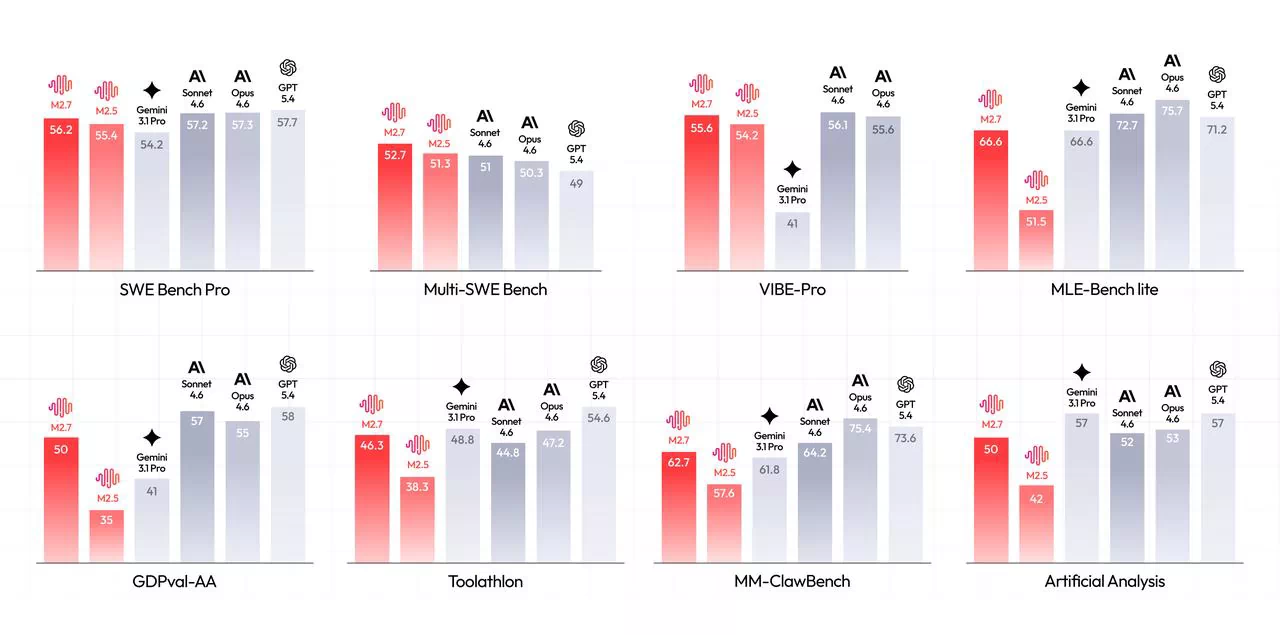

- Outstanding Performance: Scores 56.22% on the SWE-bench Pro benchmark, approaching the performance of top-tier closed-source models.

- Efficient Parameter Utilization: Reaches Tier-1 level performance with only 10 billion active parameters (roughly 4% of the 230B total), making it highly cost-effective.

- Powerful Tool Calling Capability: Agent and tool-calling functions are specifically optimized to support the execution of complex, multi-step tasks.

- Multi-Domain Application: Excels in various fields including programming, reasoning, and office tasks.
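
To make the "10 billion active out of 230 billion" figure concrete, here is a minimal sketch of top-k expert routing, the usual mechanism by which a Mixture of Experts model runs only a few experts per token. The expert count (8), the value of `k` (2), and the function names are illustrative assumptions, not details of MiniMax-M2.7's actual router.

```python
import math
import random

# Figures quoted in the text above.
TOTAL_PARAMS_B = 230
ACTIVE_PARAMS_B = 10


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of parameters used per token."""
    return active_b / total_b


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs, with weights renormalized
    so they sum to 1 across the selected experts only.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


if __name__ == "__main__":
    frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
    print(f"active fraction: {frac:.1%}")  # prints "active fraction: 4.3%"

    rng = random.Random(0)
    logits = [rng.gauss(0, 1) for _ in range(8)]  # 8 experts: hypothetical
    print(route_top_k(logits, k=2))  # only 2 of 8 experts run for this token
```

Only the selected experts' weights take part in the forward pass for a given token, which is why compute cost tracks the active parameter count rather than the total.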

Application Scenarios

- Code Generation and Debugging: Handles real-world programming issues in GitHub repositories, supporting code completion and bug fixing.

- Agent Development: Serves as the foundation for AI agent applications built on tool calling.

- Office Automation: Handles office tasks such as document processing, data analysis, and report generation.

- Reasoning and Problem-Solving: Tackles complex logical reasoning and mathematical problems.
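
Agent applications built on tool calling usually wrap the model in a dispatch loop: the model emits a structured tool call, the host program runs the matching function, and the result is fed back. The sketch below shows only the host-side dispatcher. The JSON shape (`name`, `arguments`), the `TOOLS` registry, and the two example tools are assumptions made for illustration; the exact format MiniMax-M2.7 emits is not specified here.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry mapping tool names to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator that registers a function under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> float:
    return a + b


@tool
def word_count(text: str) -> int:
    return len(text.split())


def dispatch(tool_call_json: str) -> str:
    """Run one tool call and return a JSON string to send back to the model.

    Errors are reported in the payload instead of raised, so the model
    can see what went wrong and retry.
    """
    try:
        call = json.loads(tool_call_json)
        fn = TOOLS[call["name"]]
        result = fn(**call.get("arguments", {}))
        return json.dumps({"ok": True, "result": result})
    except Exception as exc:
        return json.dumps({"ok": False, "error": f"{type(exc).__name__}: {exc}"})


if __name__ == "__main__":
    print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
    print(dispatch('{"name": "word_count", "arguments": {"text": "fix the failing test"}}'))
    print(dispatch('{"name": "missing_tool", "arguments": {}}'))
```

Returning errors as data rather than raising keeps the loop alive across bad calls, which matters when a task spans many tool invocations.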

Technical Characteristics

- Model Architecture: A large-scale language model with 230B parameters, utilizing a Mixture of Experts (MoE) design.

- Deployment Options: Supports various deployment solutions including NVIDIA NIM, Ollama, and vLLM.

- Open-Source Ecosystem: Provides model weights and configurations on platforms like GitHub, Hugging Face, and ModelScope.

- API Service: Offers commercial API services through the MiniMax Open Platform at competitive prices.
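
Servers such as vLLM and Ollama expose an OpenAI-compatible chat completions endpoint, so a locally deployed model can typically be queried with a plain HTTP POST. The sketch below only builds the request and does not send it. The model ID `MiniMaxAI/MiniMax-M2.7` and the base URL are assumptions; substitute the repository name actually published and the address of your own server.

```python
import json
import urllib.request

# Both values are assumptions for illustration.
MODEL_ID = "MiniMaxAI/MiniMax-M2.7"    # hypothetical model identifier
BASE_URL = "http://localhost:8000/v1"  # assumed local OpenAI-compatible server


def build_chat_request(prompt: str, model: str = MODEL_ID, max_tokens: int = 256) -> dict:
    """Build an OpenAI-style chat completions payload."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def build_http_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Wrap the payload in a POST request object without sending it."""
    body = json.dumps(build_chat_request(prompt)).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_http_request("Write a function that reverses a linked list.")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # To actually send it against a running server:
    #   with urllib.request.urlopen(req) as resp:
    #       print(json.load(resp)["choices"][0]["message"]["content"])
```

Because the payload follows the OpenAI chat format, the same request should work against the hosted API or a local deployment by changing only the base URL, model name, and any required authorization header.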