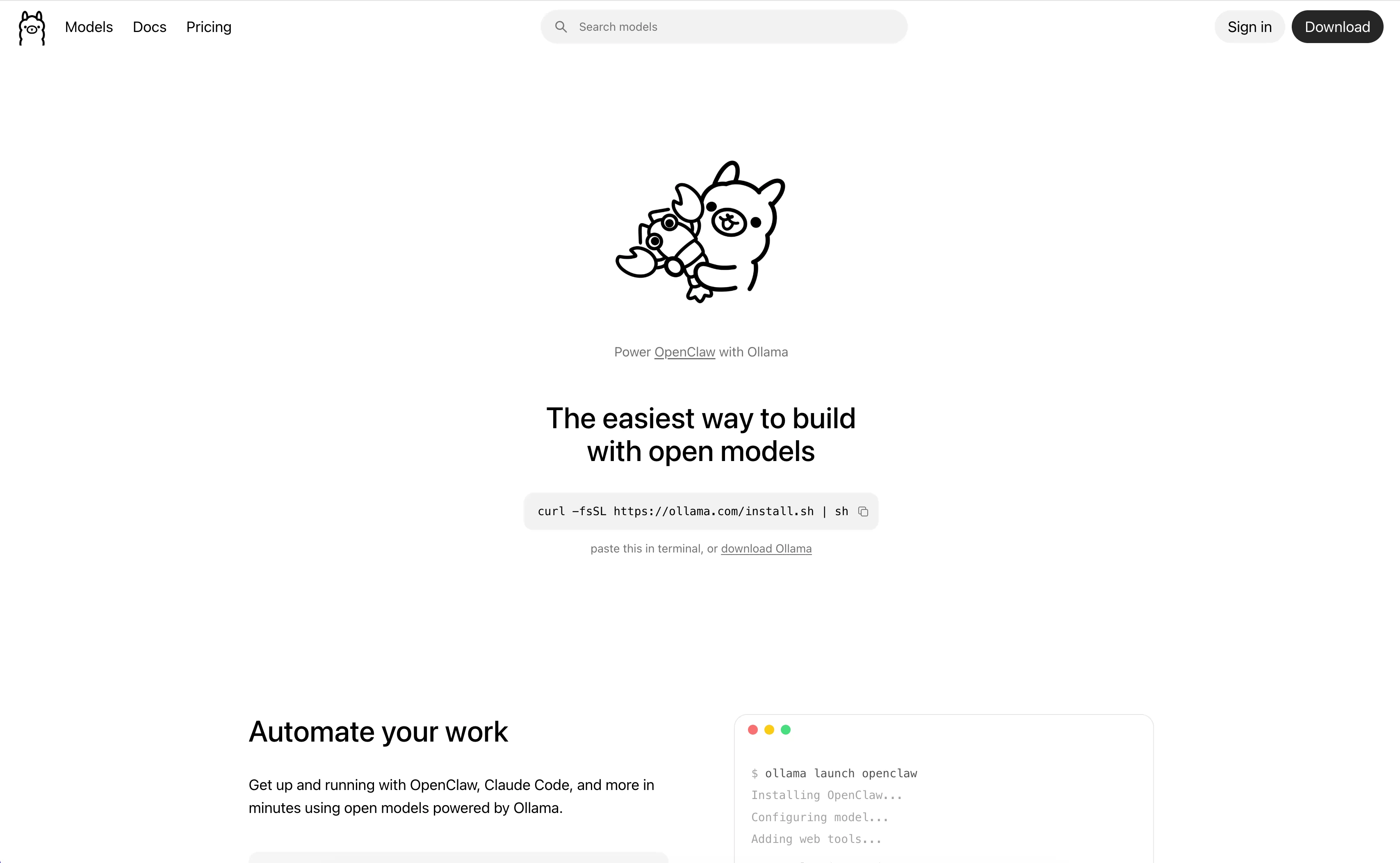

Ollama - Local LLM Platform

One-click deployment, run open-source large language models locally

Core Features

Ollama is an open-source tool for deploying and running large language models (LLMs) on local machines. Through a simple command-line interface, users can run a range of open-source models in a local environment.

Key Features

- One-Click Deployment: Quick deployment via simple installation scripts, supporting multiple operating systems.

- Model Management: Built-in model library supporting mainstream open-source models like Llama, Gemma, Qwen, and Mistral.

- Local Execution: All models and data run locally, ensuring data privacy and security.

- Modelfile Configuration: Use a `Modelfile` to define model configurations and set custom model parameters.

- REST API: Provides a standard HTTP API for easy integration with other applications.
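
As a rough illustration of the last two features, the sketch below renders a small Modelfile and builds a request for the REST API. It assumes Ollama's default local address `http://localhost:11434` and its `/api/generate` endpoint. The base model `llama3.2`, the temperature value, and the helper names `render_modelfile`, `build_generate_request`, and `generate` are placeholders chosen for this example, not anything prescribed by Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumed default local address


def render_modelfile(base_model: str, temperature: float, system_prompt: str) -> str:
    """Return Modelfile text using the FROM, PARAMETER and SYSTEM instructions."""
    return (
        f"FROM {base_model}\n"
        f"PARAMETER temperature {temperature}\n"
        f'SYSTEM """{system_prompt}"""\n'
    )


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming POST to /api/generate."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request to a running Ollama server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

To try it, the text from `render_modelfile("llama3.2", 0.7, "You are a concise assistant.")` could be saved as `Modelfile` and registered with `ollama create my-assistant -f Modelfile` (the name `my-assistant` is hypothetical). With the Ollama server running, `generate("my-assistant", "...")` should then return the model's reply as a string.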

Use Cases

- Developer Testing: Quickly test and debug large model applications in a local environment.

- Privacy-Sensitive Projects: Run models locally when handling sensitive data.

- Offline Environments: Run AI models in environments without network connectivity.

- Education & Research: An ideal tool for learning and researching large model technologies.

Main Advantages

Data Security: All models and data run locally, eliminating the need to upload to the cloud and ensuring data privacy.

Ease of Use: Run models with simple commands like `ollama run <model>`, requiring no complex configuration.
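
For scripted use, the same command can be called from a program. This is a minimal sketch, assuming the `ollama` executable is installed and on `PATH` and that the chosen model is available locally or can be pulled. The function names are hypothetical, and any model name passed in (for example `llama3.2`) is only an example.

```python
import shutil
import subprocess


def build_run_command(model: str, prompt: str) -> list[str]:
    """Argument list equivalent to: ollama run <model> "<prompt>"."""
    return ["ollama", "run", model, prompt]


def run_once(model: str, prompt: str) -> str:
    """Run a single prompt through the Ollama CLI and return its output."""
    if shutil.which("ollama") is None:
        raise RuntimeError("the ollama executable was not found on PATH")
    result = subprocess.run(
        build_run_command(model, prompt),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout.strip()
```

Passing the prompt as an argument makes the command answer once and exit, instead of opening the interactive session that `ollama run <model>` starts on its own.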

Resource Optimization: Supports model quantization, allowing large models to run on limited hardware resources.
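
To see why quantization matters on limited hardware, a back-of-the-envelope estimate helps. The sketch below counts only the storage needed for the weights (parameters × bits per weight ÷ 8). It ignores the context cache, runtime overhead, and the small amount of metadata that quantized formats add, so real usage will be higher. The 7-billion-parameter size is an illustrative assumption, not a figure from Ollama.

```python
def approx_weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB, taking 1 GB as 10^9 bytes."""
    return params_billions * bits_per_weight / 8


for bits in (16, 8, 4):
    print(f"7B model at {bits}-bit: ~{approx_weight_memory_gb(7, bits):.1f} GB")
```

Under these assumptions the estimate drops from about 14 GB at 16-bit to about 3.5 GB at 4-bit, which is the difference between needing a high-end GPU and fitting on a typical laptop.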

Community Support: Backed by an active open-source community with continuous updates and maintenance, supporting multiple mainstream open-source models.

Cross-Platform: Supports macOS, Linux, and Windows systems, meeting the needs of diverse users.