Introduction: No Longer Just "Drawing Accurately," But "Thinking Clearly"

Over the past year, AI image generation seemed stuck in a kind of "refinement fatigue": models produced ever more polished images, yet text rendering still failed, multilingual support was virtually nonexistent, and complex instruction following was hit-or-miss. That deadlock held until OpenAI launched ChatGPT Images 2.0.

OpenAI describes this release as the "next evolution": a state-of-the-art model that can take on complex visual tasks and generate precise, immediately usable visual content. Where earlier models followed a passive "you say, I draw" rendering logic, the core change in Images 2.0 is that it incorporates the reasoning capabilities of the o-series models, giving image generation an element of "strategic design" for the first time. In Sam Altman's words, this feeling is "like suddenly jumping from GPT-3 to GPT-5."

Pixel-Level Precision: An AI That Can Finally "Read the Text Clearly"

One of the most persistent weaknesses of earlier image models was near-certain distortion when rendering small fonts, UI elements, icons, and dense typography. Images 2.0 has turned that weakness into its headline feature.

The new model can accurately render extremely small text and arrange the hierarchy of complex layouts correctly. In one official demonstration, the model rendered the words "GPT image 2" engraved on a single grain of rice, a showcase of its fine-grained control. In the API, it supports output resolutions up to 2K, sufficient for print-grade materials and detailed interface design.
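To put the 2K figure in print terms, the arithmetic can be sketched as follows. This is only an illustration: it assumes "2K" means roughly 2048 pixels on the long edge and uses 300 DPI as a common print target. Neither number comes from OpenAI's announcement.

```python
def print_size_cm(pixels: int, dpi: int = 300) -> float:
    """Physical length in centimeters of `pixels` printed at `dpi` dots per inch."""
    inches = pixels / dpi
    return inches * 2.54


# Assumption: "2K" is taken as 2048 px on the long edge (not stated in the article).
LONG_EDGE_PX = 2048

for dpi in (300, 150):
    print(f"{dpi} DPI: {print_size_cm(LONG_EDGE_PX, dpi):.1f} cm")
```

Under these assumptions the long edge prints at roughly 17 cm at 300 DPI and roughly 35 cm at 150 DPI, so flyers and small posters fit comfortably while large-format prints would still need upscaling or a lower print density.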

More noteworthy is Images 2.0's grasp of "imperfect realism." It no longer pursues only the over-smooth AI aesthetic; it can reproduce the grain of 35mm film, the overexposure and motion blur of disposable cameras, and even strands of hair and the hems of clothes blowing in the wind. This grasp of "intentional design" rather than "approximate imitation" is what makes the output commercially viable.

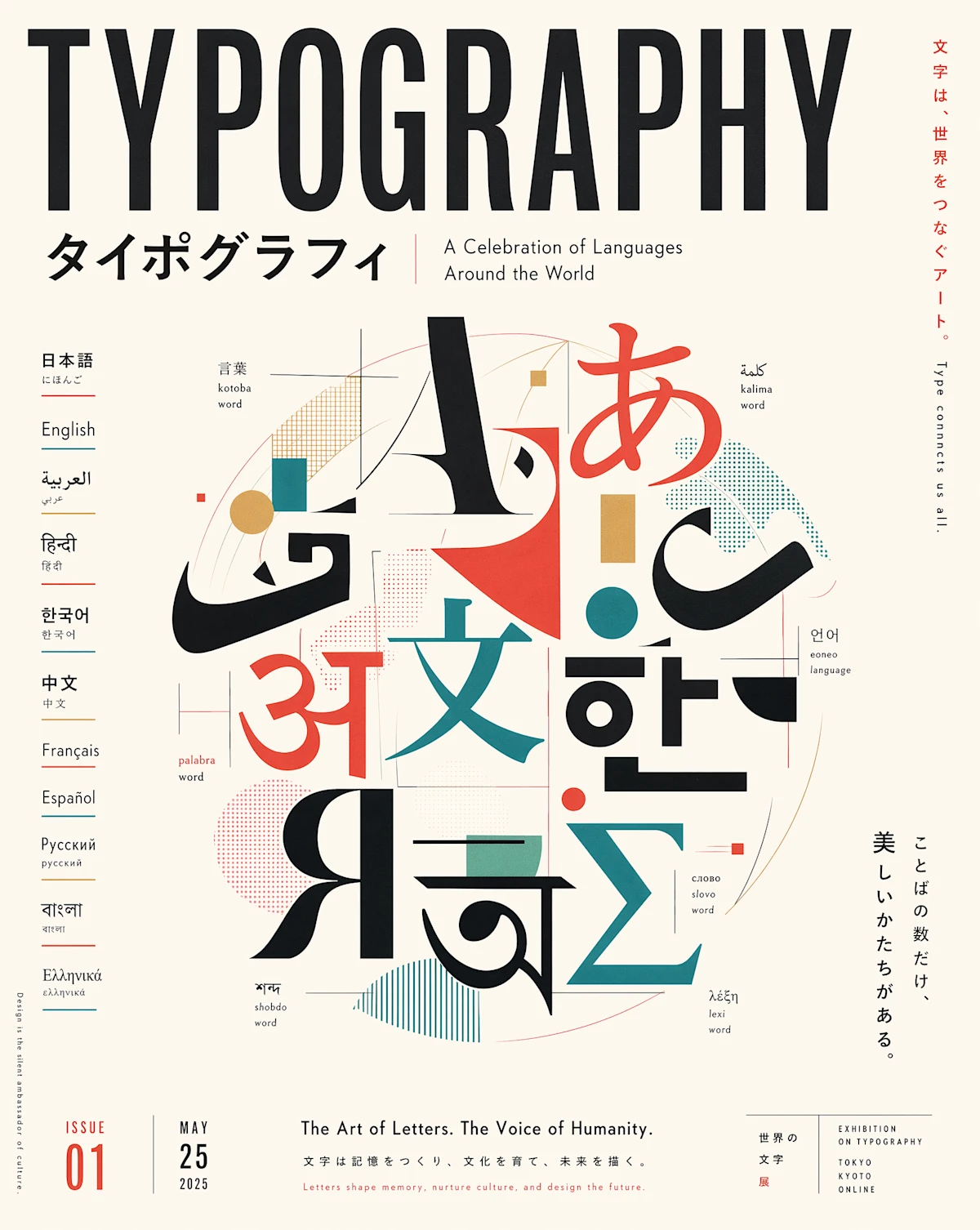

Multilingual Leap: Accurate Output in Chinese, Japanese, and Korean

If pixel-level precision answers the question of "whether it can be used," multilingual capability determines "who can use it."

Previous image models handled English and other Latin-script text reasonably well, but with Chinese, Japanese, Korean, Hindi, or Bengali they often produced garbled, nonsensical text. Images 2.0 makes a qualitative leap here: it renders non-Latin characters correctly and keeps sentences fluent and typesetting natural, so that language itself becomes part of the design.

During the official launch livestream, OpenAI research scientist Boyuan Chen showcased a full-page, full-color Chinese comic strip telling the story of how the team optimized the model's Chinese text rendering. The comic included both densely typeset infographic text and self-deprecating internet memes like "稳稳地接住你" (roughly "I'll catch you steadily," a stock GPT phrase widely mocked by Chinese users). Behind this official self-mockery lies confidence in a deep understanding of multilingual scenarios.

Thinking Mode: The First Image Model That Can "Think"

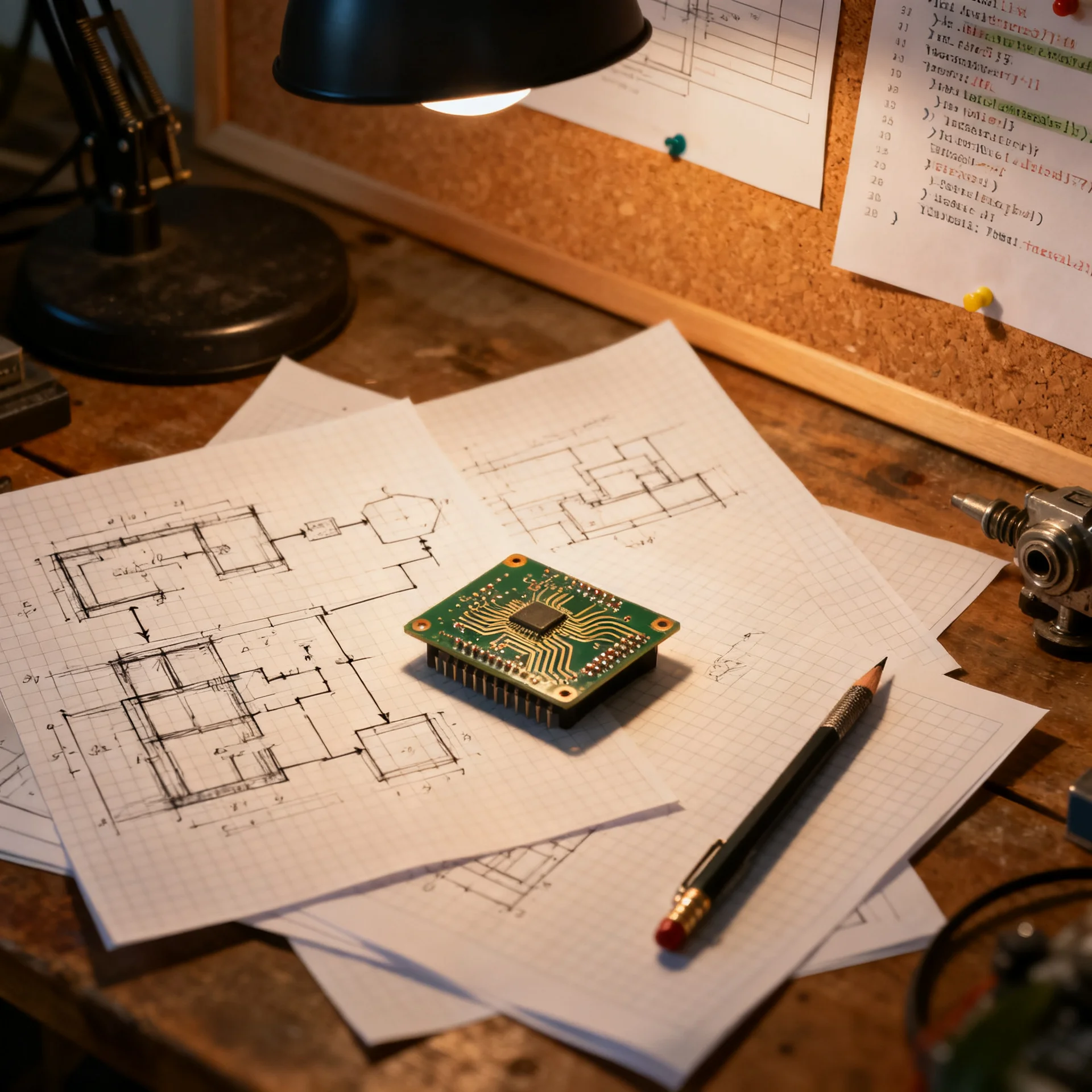

The most disruptive upgrade in Images 2.0 sits inside "Thinking Mode," which marks the first time in the industry that agentic reasoning has been systematically integrated into the image generation pipeline.

In Thinking or Pro mode, the model no longer starts drawing right away. It first goes through an internal research and planning phase: parsing the entity relationships in the prompt, planning the image layout, reasoning about visual hierarchy, and, when necessary, searching online for up-to-date information. It can then generate not only single images but also, from a single prompt, up to 8 multi-panel visuals with a consistent style, coherent characters, and progressive composition.

This means that for everything from social media multi-size asset packs to multi-page comic storyboards, from whole-house design plans to academic poster presentations, users no longer need to generate images one by one and manually stitch them together. A single prompt yields a complete workflow deliverable. In this process, Images 2.0 acts more like a "visual thinking partner," taking on much of the intermediate work from concept to finished product.

Full Rollout: ChatGPT, Codex, and API Opened Simultaneously

OpenAI clearly does not intend to leave this technology at the demo stage. On launch day, Images 2.0 became available to all ChatGPT and Codex users, with advanced output that includes the Thinking process available to Plus, Pro, and Business subscribers. The underlying gpt-image-2 model was released in the API at the same time, so developers can integrate it into their own products.
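For developers, an integration might start from something like the sketch below. It uses only the Python standard library and builds the request without sending it. The model name gpt-image-2 comes from the announcement; the endpoint path and the `prompt` and `size` fields are assumptions carried over from OpenAI's existing image generation endpoint and may differ for the new model.

```python
import json
import os
import urllib.request

# Assumption: gpt-image-2 is served from the same endpoint as earlier image models.
API_URL = "https://api.openai.com/v1/images/generations"


def build_image_request(prompt: str, api_key: str, size: str = "1024x1024") -> urllib.request.Request:
    """Build (but do not send) a POST request for one generated image."""
    payload = {"model": "gpt-image-2", "prompt": prompt, "size": size}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_image_request(
    "A cafe poster with the headline 'Grand Opening' in Chinese and English",
    api_key=os.environ.get("OPENAI_API_KEY", "sk-placeholder"),
)
# To actually call the API, pass `request` to urllib.request.urlopen and parse the JSON body.
```

Building the request separately from sending it keeps the example runnable offline and makes the payload easy to inspect before any tokens are billed.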

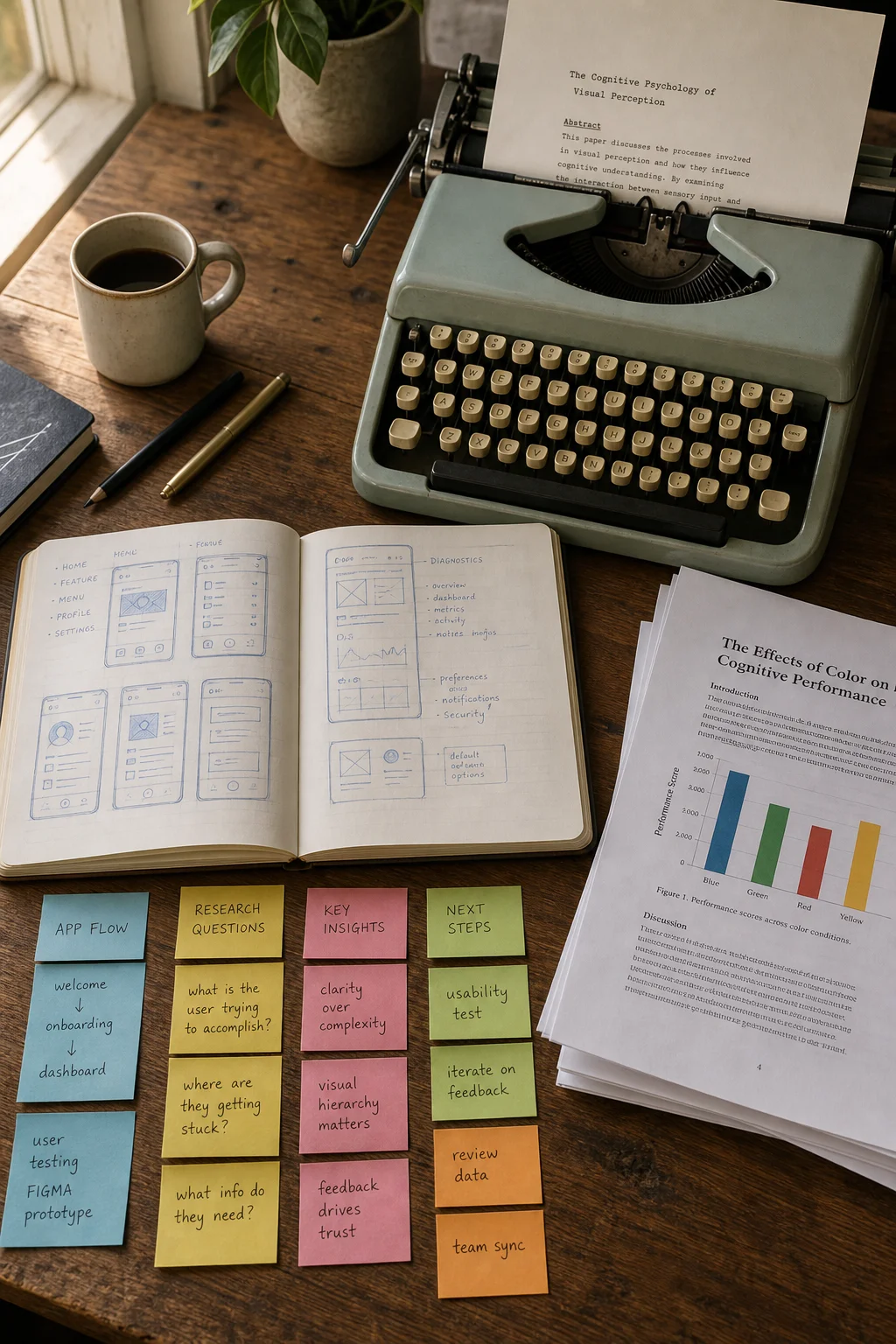

Within the Codex workflow, image generation, code, design, and iteration are integrated into the same space. Designers can quickly generate multiple UI directions and prototypes, compare options, and directly convert the best design into a webpage or product experience without switching back and forth between different tools.

On pricing, gpt-image-2 keeps token-based billing, with image output prices slightly lower than the previous generation's. For cost-sensitive scenarios, developers can still call a lighter version of the model for batch previews and draft iterations.
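Token-based billing means the cost of an image depends on how many tokens go in and come out. A minimal sketch of the arithmetic follows; the token counts and per-million prices are placeholders chosen for illustration, not OpenAI's published rates.

```python
def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost of one request when input and output tokens are priced per million."""
    total = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return total / 1_000_000


# Hypothetical values for illustration only.
EXAMPLE_INPUT_PRICE = 5.0    # USD per 1M input tokens (placeholder)
EXAMPLE_OUTPUT_PRICE = 30.0  # USD per 1M image output tokens (placeholder)

cost = estimate_cost_usd(200, 4000, EXAMPLE_INPUT_PRICE, EXAMPLE_OUTPUT_PRICE)
print(f"Estimated cost per image: ${cost:.4f}")
```

Because output tokens dominate the total in this example, a lower image output price or a lighter model for drafts has a direct effect on batch costs.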

Competitive Benchmarking: Three First-Place Finishes in Arena by a Significant Margin

In blind tests on the third-party evaluation platform Image Arena, Images 2.0 ran under the codename "TapeDuck" before its release. After the official unveiling, it ranked first on all three core leaderboards: text-to-image, single-image editing, and multi-image editing. In the text-to-image category, it led the second-place model by 242 points, which Arena described as "the largest gap ever seen."
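To get a feel for what a 242-point lead means, the gap can be converted into an expected head-to-head win rate. The sketch below assumes the leaderboard uses an Elo-style scale (base 10, divisor 400), which is common for arena-style rankings but is not stated in the article.

```python
def expected_win_rate(rating_gap: float, scale: float = 400.0) -> float:
    """Expected win probability of the higher-rated model under the Elo formula."""
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


# 242 is the lead reported above; the Elo-style scale of 400 is an assumption.
p = expected_win_rate(242)
print(f"Expected head-to-head win rate: {p:.0%}")
```

Under that assumption, a 242-point lead corresponds to winning roughly four out of five blind comparisons against the second-place model.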

This result validates Images 2.0's breakthrough in complex instruction following and consistency, and it marks OpenAI regaining technical influence in the visual generation field.

Limitations and Sober Reflections: The Revolution Is Not Yet Complete

Despite the leap in capability, OpenAI candidly lists the model's limitations in its official blog. Tasks that require a complete model of the physical world (such as origami tutorials or complex structures like Rubik's cubes) and precise details on hidden, oblique, or reverse surfaces may still exceed the model's abilities. Extremely dense or repetitive detail, such as fine sand, also remains a challenge. Diagrams that need precise arrows or component annotations should still be proofread manually.

Additionally, ultra-high-resolution output above 2K is still in testing and may be unstable. These boundaries are a reminder that Images 2.0 has moved past the "toy" stage, but in high-precision industrial design and rigorous scientific visualization, human-machine collaboration remains necessary.

Conclusion: Has the "iPhone Moment" for Image Generation Arrived?

From DALL·E to Midjourney, and now to ChatGPT Images 2.0, AI image generation has come a long way from "amazing" to "practical." The unique value of Images 2.0 lies not in outperforming competitors on any single metric, but in being the first to bundle "reasoning," "multilingual support," "pixel-level control," and "workflow integration" into a deployable productivity tool.

When designers can use a single prompt to get a set of style-consistent, text-accurate, ready-to-use e-commerce posters, and when content creators no longer need to toggle between Photoshop and translation software for an infographic, we may be witnessing a critical turning point where AI image generation moves from "creative assistance" to "production infrastructure."

Of course, the rapid advancement of technology always brings societal discussions about creator rights and job displacement. For OpenAI, figuring out how to make people truly trust and harness this capability may be more difficult than getting it to generate a grain of rice with engraved text.

Affordable Model Access

Still troubled by model selection and integration debugging? LinkThinkAI offers you a one-stop solution.

We now fully support cutting-edge models like DeepSeek-V4, GPT-5.5, and GPT-Image-2. Our API follows the OpenAI style throughout, so you can switch over and go live by simply changing the Base URL, greatly reducing integration and migration costs.
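In practice, an OpenAI-style gateway usually means the endpoint paths stay the same and only the base URL changes. The sketch below illustrates that idea with the standard library. The gateway URL is a hypothetical placeholder, and the assumption that the path layout matches OpenAI's should be checked against the provider's documentation.

```python
from urllib.parse import urljoin

# Both base URLs end with a slash so urljoin keeps the "/v1/" prefix.
OPENAI_BASE_URL = "https://api.openai.com/v1/"
GATEWAY_BASE_URL = "https://gateway.example.invalid/v1/"  # hypothetical placeholder


def images_endpoint(base_url: str) -> str:
    """Resolve the image generation endpoint under a given base URL."""
    return urljoin(base_url, "images/generations")


print(images_endpoint(OPENAI_BASE_URL))
print(images_endpoint(GATEWAY_BASE_URL))
```

Keeping the base URL in one configuration value means the rest of the calling code does not need to change when switching providers.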

Register now and get an exclusive 25% discount on GPT series models via our platform, helping you experience top-tier model capabilities at a lower cost.

Our platform integrates multiple suppliers and multimodal capabilities, providing:

- Flexible Routing: Supports channel, group, and fallback strategy configurations to ensure high service availability.

- Clear Costing: Transparent budgeting and billing through model multipliers, usage statistics, and grouping strategies.

- Simple Integration: Steps from account creation to the first successful call are clear and straightforward.
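As a generic illustration of the fallback idea in the list above, the sketch below tries a sequence of channels in order and returns the first success. The channel functions are hypothetical stand-ins, and this is not a description of how the platform's routing is actually implemented.

```python
from typing import Callable, Sequence


def call_with_fallback(channels: Sequence[Callable[[str], str]], prompt: str) -> str:
    """Try each channel in order and return the first successful result."""
    errors = []
    for channel in channels:
        try:
            return channel(prompt)
        except Exception as exc:  # a real router would catch narrower error types
            errors.append(exc)
    raise RuntimeError(f"all {len(channels)} channels failed: {errors}")


# Hypothetical stand-ins for two upstream channels.
def primary(prompt: str) -> str:
    raise TimeoutError("primary channel timed out")


def backup(prompt: str) -> str:
    return f"image for: {prompt}"


result = call_with_fallback([primary, backup], "poster")
print(result)
```

Here the primary channel fails, so the call falls through to the backup and still returns a result.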

Say goodbye to tedious one-by-one integrations. Manage all models with one document and one API key. Visit https://linkthinkai.com now for efficient, stable, and cost-effective model access.